Control the context behind every decision.

Qualytics validates data at the moment it's used, applying data quality as a shared control layer where AI maintains coverage and your teams govern what "trusted" means.

The Problem

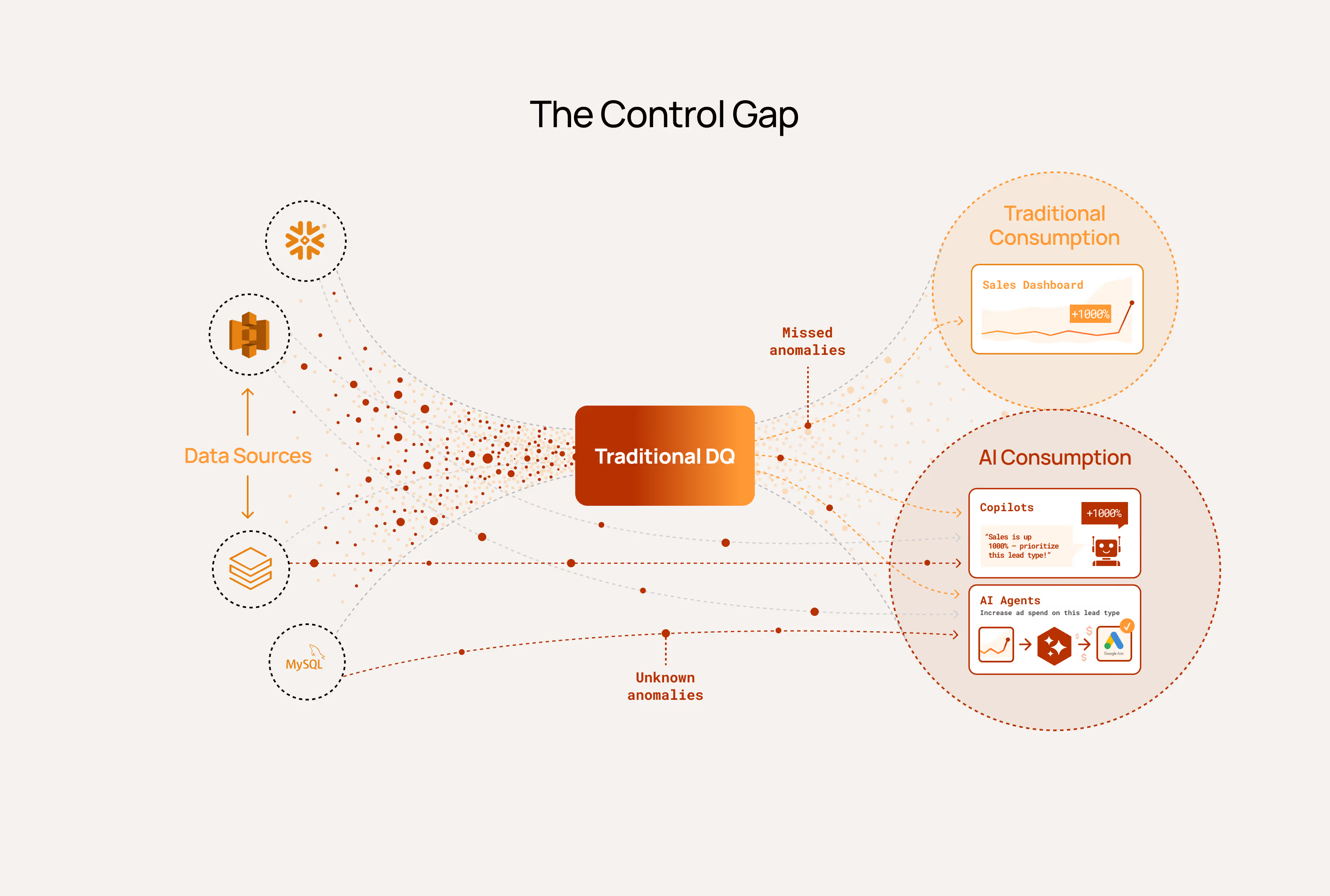

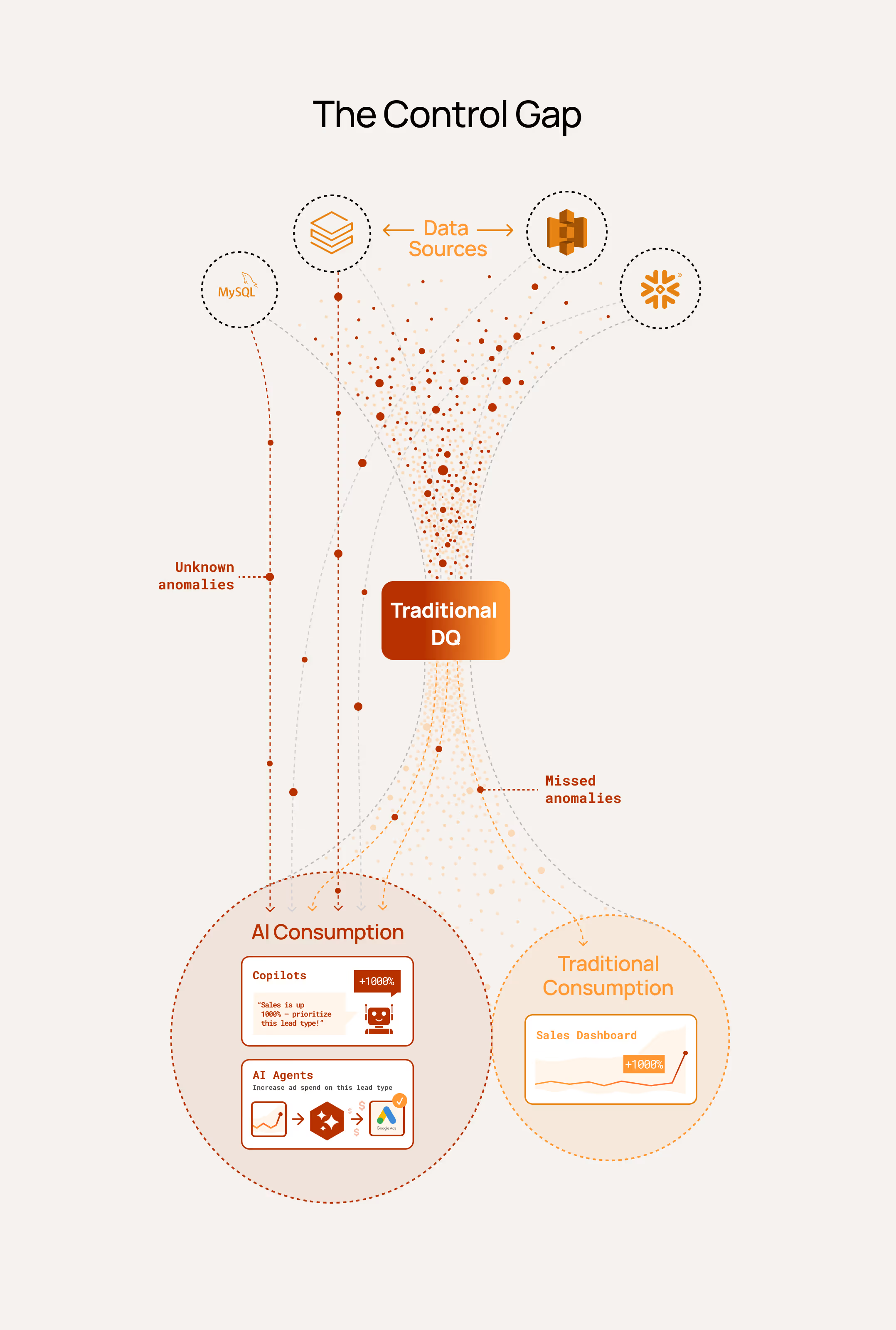

Context without control creates risk.

Every data leader knows the pattern: issues discovered downstream, teams scrambling to diagnose them, decisions already made on bad inputs. Now copilots and agents depend on that same data and context to reason and act, propagating errors at machine speed before anyone notices. Traditional data quality wasn't built to control context at the point of use.

The Solution

The Data Control Layer for Trusted Context

Qualytics enables validate-at-use: data is evaluated before it drives decisions, and data quality is applied as a set of real-time controls.

By continuously evaluating data as it is used, Qualytics produces governed signals that determine whether systems should proceed, flag issues, or prevent actions entirely. This ensures the context behind every decision is trusted when it matters.
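To make the three outcomes (proceed, flag, prevent) concrete, here is a minimal sketch of how a consuming system might gate on a quality signal before acting. Everything in it is a hypothetical illustration: the `QualitySignal` shape, the `decide` function, the `run_agent_step` consumer, and the threshold values are assumptions, not Qualytics' API or scoring model.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    """The three control outcomes: proceed, flag, or prevent."""
    PROCEED = "proceed"
    FLAG = "flag"
    PREVENT = "prevent"


@dataclass
class QualitySignal:
    # Hypothetical signal shape (assumption): how many checks ran and passed,
    # and whether any check marked as critical failed.
    checks_passed: int
    checks_total: int
    critical_failure: bool


def decide(signal: QualitySignal,
           flag_below: float = 0.99,
           prevent_below: float = 0.90) -> Action:
    """Map a quality signal to a control decision (illustrative thresholds)."""
    if signal.checks_total == 0:
        # No coverage means no evidence of trust, so flag instead of silently proceeding.
        return Action.FLAG
    if signal.critical_failure:
        # Any critical failure blocks the action outright.
        return Action.PREVENT
    pass_rate = signal.checks_passed / signal.checks_total
    if pass_rate < prevent_below:
        return Action.PREVENT
    if pass_rate < flag_below:
        return Action.FLAG
    return Action.PROCEED


def run_agent_step(signal: QualitySignal) -> str:
    """Hypothetical consumer: an agent only acts when the control says proceed."""
    action = decide(signal)
    if action is Action.PREVENT:
        return "blocked"
    if action is Action.FLAG:
        return "needs review"
    return "executed"


if __name__ == "__main__":
    for s in (QualitySignal(100, 100, False),
              QualitySignal(95, 100, False),
              QualitySignal(100, 100, True)):
        print(decide(s).value, run_agent_step(s))
```

The point of the design is that the decision happens at the moment of use, inside the consumer's path, rather than in a downstream report someone reads later.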

Augmented Data Quality

AI learns how your data behaves and generates rules automatically, adapting as data evolves. Your teams define what good looks like and guide governance. The result is broad, continuously improving coverage without the manual effort that limits most data quality programs.

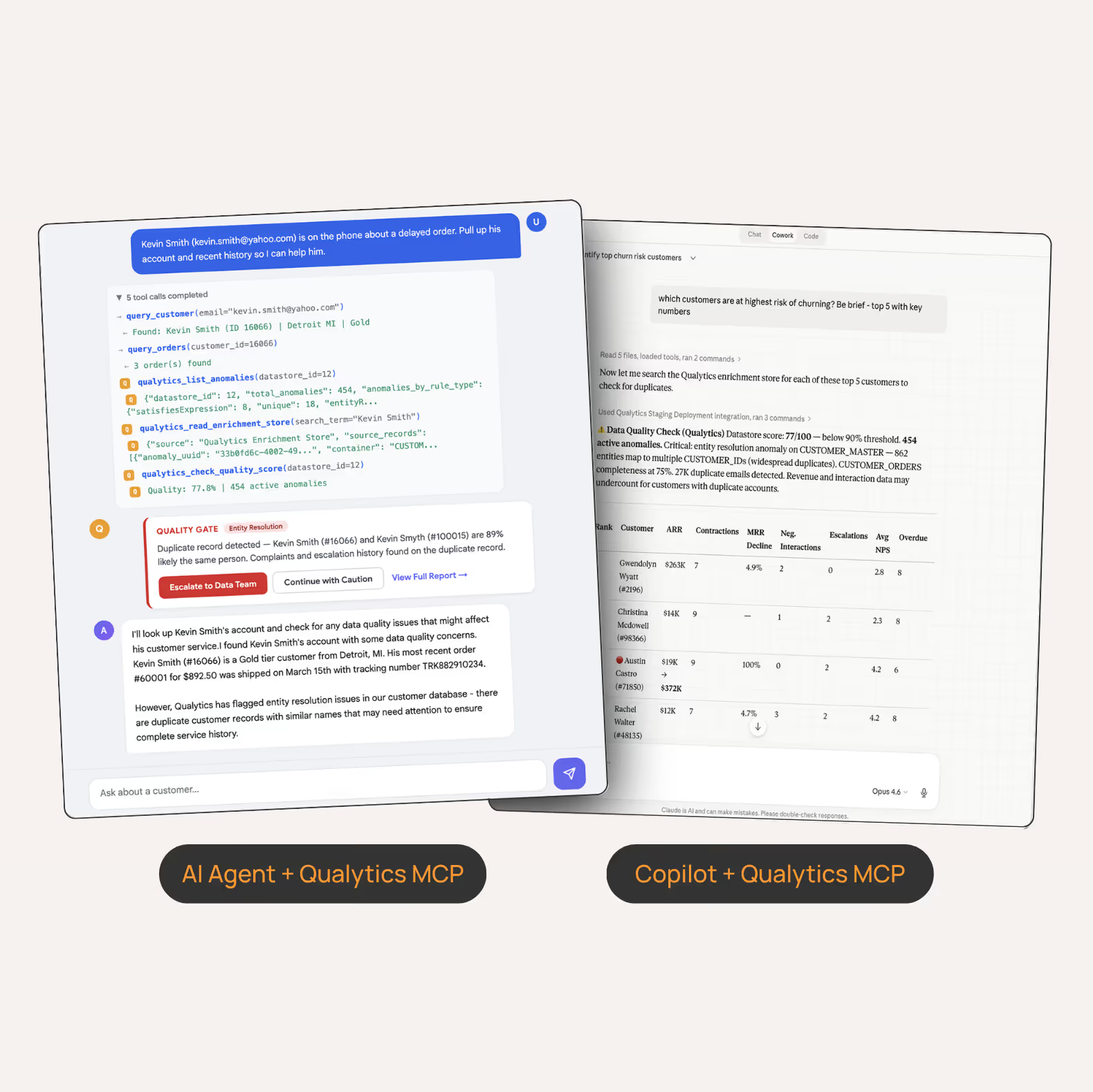

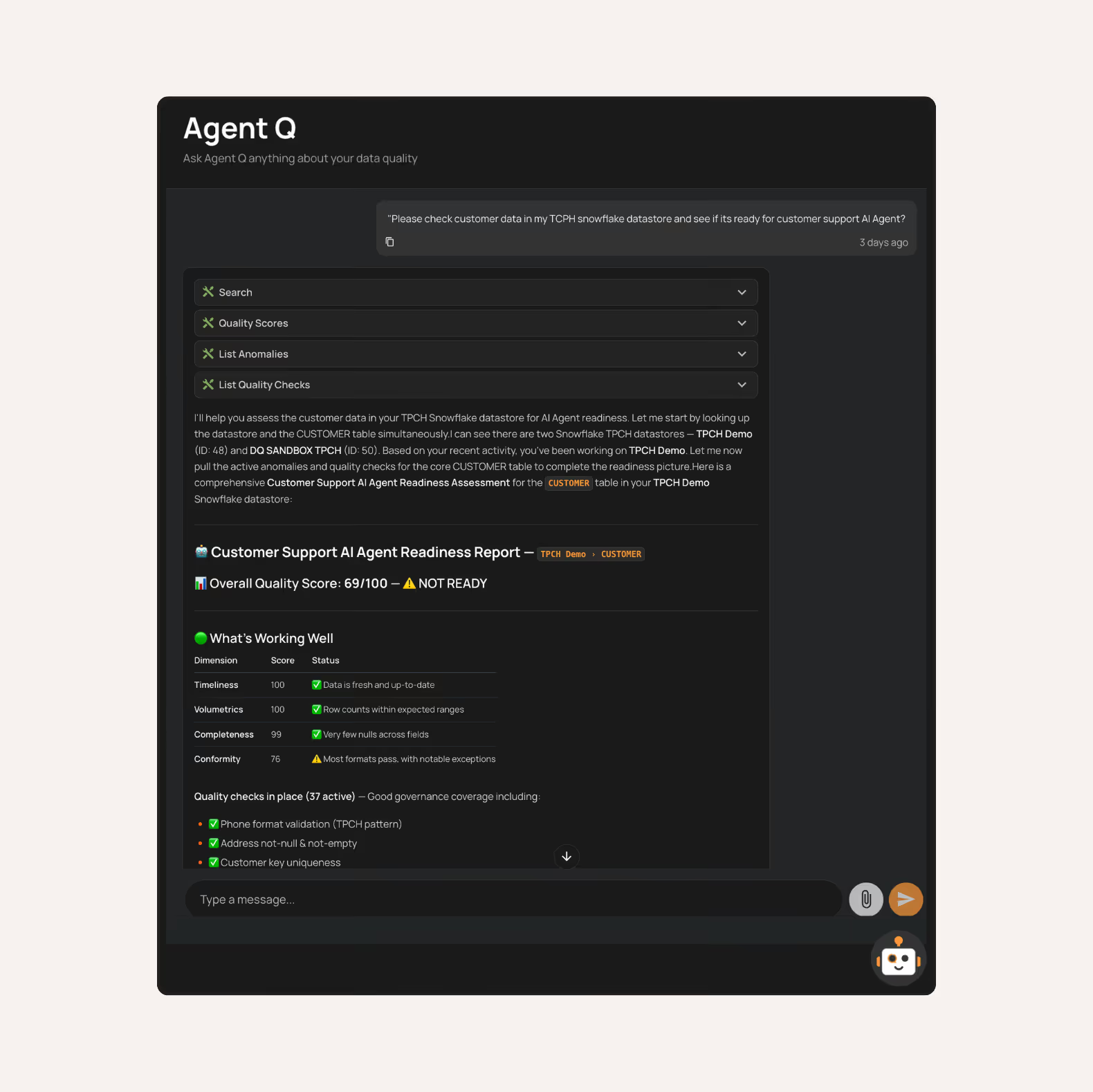

Built for Humans and AI

Business teams, data teams, and AI systems work on the same governed foundation for data quality. Teams investigate anomalies, define rules, and explore metadata through our low-code interface, while AgentQ makes governance accessible through natural language. Copilots and agents access the same governed context without requiring separate systems or parallel workflows.

Trusted Context at Use

Data quality is applied as real-time controls across analytics, applications, agents, and AI workflows, shaping how data is used. Copilots and agents are first-class citizens able to access quality signals through MCP, API, and real-time interfaces.

This makes validate-at-use possible, moving data quality from downstream checks to a system that actively governs how insights are generated and actions are taken.

How It Works

Qualytics operates as a continuous data control layer across how data is created, transformed, and used. It learns how data behaves and maintains coverage as data evolves. Control is applied wherever data is used, guided by business context from your teams.

Step 1

Profile & Understand Your Data

Qualytics connects to your data sources and learns how your data behaves by identifying patterns, relationships, and expected structures.

Step 2

Generate & Maintain Coverage

Qualytics AI automatically infers and maintains data quality rules as data evolves, while teams guide the system with business context.

Step 3

Continuously Monitor Quality

Data is continuously evaluated against learned patterns and expectations, producing quality signals that determine whether data is fit for purpose.

Step 4

Act on Quality Signals

Quality signals are applied as controls wherever data is used. Humans, copilots, and agents evaluate whether data is fit for purpose before generating insights or taking action.

The Results

Enterprises use Qualytics to operationalize trusted data at scale.

20x ROI

in year one, by automating over 20K data-quality rules

$3.67M

projected savings, reclaiming thousands of engineering hours

4x faster remediation

with 50+ business users resolving anomalies alongside data teams

1.5 FTEs

running a global DQ program, estimated 5x team efficiency

Act on trusted data, every time.

Control the context powering your analytics, AI, and operations.

Discover more insights from the Qualytics team

You can vibecode a data quality tool. You can't vibecode a data quality program.

AI coding tools make standing up data quality validation easy, but running an enterprise-grade program takes years of product discipline.

Data Quality vs Data Control: Why AI Demands Controls

AI removes the human safety net that contained bad data. The data control layer validates data at the moment it's acted on.


Qualytics Launches Data Control Layer to Govern Context for AI Systems

Qualytics, the AI-augmented data quality platform, today launched the data control layer: a new approach to governing the context AI systems reason and act on.